I am wondering to what extent it has been verified that, even with blobless and open source firmware/software, the phone does not send data through built-in hardware features.

Or, even if the hardware does not send any data, how far can a built-in backdoor inside the SoC be tested for or ruled out?

No, it doesn’t imply that, but Purism pursues multiple goals in their products.

Privacy/Security - is enhanced by isolation. What you don’t control - does not live in your house (memory/cpu).

Freedom (from vendors) is enhanced by deblobbing. Device must just work without any ritual offerings and sacrifices.

You might be interested in this video

This is known, but this is at the OS level; it would probably be the same without the blobless effort.

Could be. I’m not sure how they measured it.

I would configure a router to capture what is coming from each device. I have seen videos that specifically measured what Android sends out using a router.
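For what it's worth, here is a minimal sketch of how such a router capture could be tallied afterwards. It assumes the router saved the traffic as a classic pcap file with Ethernet framing (the format tcpdump writes by default) and that only untagged IPv4 frames matter. The function name `outbound_bytes_by_flow` and the file name in the usage note are made up for illustration, not taken from any tool mentioned in this thread.

```python
import io
import struct
from collections import Counter


def outbound_bytes_by_flow(pcap_bytes):
    """Sum IPv4 bytes per (source IP, destination IP) pair in a classic pcap capture.

    Assumptions: classic pcap (not pcapng), Ethernet link type, no VLAN tags.
    Anything that is not plain IPv4 is skipped.
    """
    stream = io.BytesIO(pcap_bytes)
    header = stream.read(24)  # the pcap global header is 24 bytes
    if len(header) < 24:
        raise ValueError("truncated pcap global header")

    # The magic number tells us the byte order the file was written in.
    magic = struct.unpack("<I", header[:4])[0]
    if magic == 0xA1B2C3D4:
        endian = "<"
    elif magic == 0xD4C3B2A1:
        endian = ">"
    else:
        raise ValueError("not a classic pcap file")

    link_type = struct.unpack(endian + "I", header[20:24])[0]
    if link_type != 1:  # 1 = Ethernet
        raise ValueError("only Ethernet captures are handled in this sketch")

    totals = Counter()
    while True:
        record = stream.read(16)  # per-packet header: ts_sec, ts_usec, incl_len, orig_len
        if len(record) < 16:
            break
        _sec, _usec, incl_len, _orig_len = struct.unpack(endian + "IIII", record)
        frame = stream.read(incl_len)
        if len(frame) < incl_len:
            break  # capture was cut off mid-packet
        if len(frame) < 34:  # 14 bytes Ethernet + 20 bytes minimal IPv4 header
            continue
        ether_type = struct.unpack("!H", frame[12:14])[0]
        if ether_type != 0x0800:  # not IPv4
            continue
        ip_header = frame[14:]
        total_length = struct.unpack("!H", ip_header[2:4])[0]
        src = ".".join(str(b) for b in ip_header[12:16])
        dst = ".".join(str(b) for b in ip_header[16:20])
        totals[(src, dst)] += total_length
    return totals
```

Reading a capture with `open("capture.pcap", "rb").read()` (hypothetical file name) and passing the bytes in gives a per-flow byte count, so you can see which device talks to which address and how much. It tells you nothing about what is inside encrypted traffic, and it only sees traffic that actually goes through the router.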

Also, this is the dev kit. I don’t know if that makes a difference.

While almost impossible to prove/guarantee that no such backdoor exists, it is both unlikely and hard to sensibly make use of. As the CPU knows nothing about the modem or other peripherals, how should it exfiltrate the data it processes? In the L5, it is the OS level that connects these things together.

The plot would have to go something like this:

NSA HQ: “Ok, we obtained the schematics of the L5 and which modems they intend to use and how they are connected. Now let’s force NXP to embed a specialized backdoor for this scenario.” “Uh… NXP claims the hidden 100MB ROM is already full with the backdoors for the other devices built from it…”

(oh, and you don’t want to have any revealing bugs in those hidden backdoor OSes…)

Likewise, the modem firmware might contain a backdoor, but there is not a lot of useful stuff it can do. As it has no access to RAM, it basically knows the same things as the tower it is connected to.

Classic case of where the infrastructure boundaries lie. Is the phone yours or “theirs”? With practically all smartphones nowadays, the boundary is all the way up to your ear - the phone is “theirs”. “They” control it. They might allow you some configuration options but, since they are on “their” territory, “they” override them whenever “they” want.

Custom ROMs don’t do much about it. They are just excursions into “their” territory, tolerated by “them”, perhaps only for PR reasons, who knows. The whole territory is still “theirs”. With everything integrated into one chip, I would not be surprised if it turns out that the modem is the main CPU that controls everything, and the OS and applications are just run in some kind of a slave container.

Purism pushes the boundary one bit - with the Librem 5, the boundary is on the USB interface between the main CPU and the modem. The modem is still “theirs”, but at least we gain a foothold. In the L5’s case, binary blobs (like memory initialization code) are now excursions from “their” territory into ours, and we have to tolerate them - for now. That’s also why there is the effort to deblob - because deblobbing increases our privacy and our security when it is done on our territory. Deblobbing may also help push the boundary further, behind the modem itself, where it should be in the first place.

PS. Who are “they” anyway?

“they” are OUR $ - expressed through VAT (owed at every purchase), combined annual tax and all the other bad purchases we make collectively throughout the world each millisecond 24/7/365 - @ OP - have you seen a stock market screen ?

I’ve not seen this before; that’s crazy. I didn’t know it was so much data. Mental.

not sure what you mean with stock market screen?

just checking if you’ve seen one …

still not sure what you want to express, if this was addressed to me

i just attempted to bridge what @Dwaff said above with my poor world-domination-economics explanation skills … if looking at those pictures has no effect in relation to what was already stated in this thread then i don’t know what more i could say to explain more … i don’t believe a TLDR would interest anyone here …

Just because you’re paranoid doesn’t mean they’re not really out to get you. I won’t be satisfied until I and others have full access to both the schematics and to all code running on the phone. It’s probably too much for any one person to dissect. But the collective will ferret out any and all hardware and software that disrespects privacy and security. Once we gain some momentum, the next step will be to get the big SoC manufacturers to integrate consumer privacy and security certifications into their company-internal quality compliance systems, where such compliance can be audited by independent third parties. Any one of them that wants to sell their products into mainstream consumer markets, or into any part of the supply chain to those markets, would then be forced into such compliance. That would give strong, auditable privacy and security compliance. If you’ve ever worked for a company with automotive or aerospace certifications, you’ll know that neither management nor the employees would ever endanger those certifications that allow their company to sell into those markets when billions of dollars are on the line. Samsung, LG, and all of the others would have to follow suit if they want their phones or chips to end up in consumer devices, since only those who voluntarily comply can obtain the certifications that the consumer demands in their products.

hehe … the more accurate term for our time here on earth would be … out-to-get-your-money … without my money just my body is worth a few cents (just a few valuable minerals in there - someone bothered to make a study at some point but can’t remember where to link to) lol

by the same analogy the software is the most important since without it hardware is just minerals … dumb vibrating minerals lol

I would like to see an independent test done by a third party instead of the company selling the product.

You could say blobless doesn’t equal privacy, but it is required to be able to know what your device does. A single binary blob present on your device can execute code that you can’t inspect. This is one of the worst problems in computing privacy: not being able to know what some piece of software running on your computer does. This COULD mean that it has backdoors that you don’t know about (especially if that code is running with elevated privileges), or that it sends data to some company that you might not agree with (which can be checked with other applications scanning network traffic).

It might not equal privacy, but it is required to have a privacy respecting device. If it has binary blobs, it’s not secure.

i would insist that we call it not-trust-able since “not-secure” implies that we have PROOF if and what the ACTUAL backdoor does (active/dormant state if at all present).

the point is we don’t KNOW (outside of what Edward Snowden’s revealed through the press so far and what others have given us) what exactly we are dealing with but we do KNOW the scale and the effects just not everything just yet.

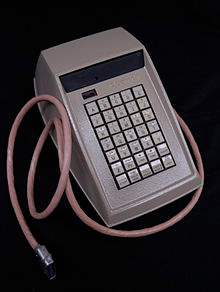

Like this Quotron II desk unit?

https://en.wikipedia.org/wiki/Stock_market_data_systems#/media/File:Quotron.jpg

Well there is proof, proof that there is a security risk.

You don’t have to prove that there is actually some application spying on you, when you can choose between fully free software or blobbed software.

Having such blobs is by itself a security risk, no matter what code they are executing, because of the way a blob obfuscates its contents.

In some way it’s kind of like worn tyres on a car. People consider those unsafe. Not because your car crashes as soon as your tyres are worn, but because the risk of a crash increases the more worn your tyres are. It’s kind of like that for blobs: it’s not that every uninspectable binary blob runs malicious code, but the risk of it increases the more blobs you have.

There’s a simple solution to the first: replace the tyres with new ones.

The second has the exact same solution, which is much harder in practice: don’t run proprietary software.
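To put the “risk grows with the number of blobs” point in numbers, here is a toy sketch. It assumes every blob independently has the same small chance of containing something malicious, which is a simplification made only for illustration. The function name and the 1% figure are invented, not measured.

```python
def chance_of_any_bad_blob(blob_count, per_blob_risk):
    """Probability that at least one of `blob_count` blobs is malicious.

    Toy model: each blob is assumed to be independently malicious with
    probability `per_blob_risk`. Real blobs are neither independent nor
    equally risky, so treat the result as an illustration only.
    """
    if blob_count < 0:
        raise ValueError("blob_count must not be negative")
    if not 0.0 <= per_blob_risk <= 1.0:
        raise ValueError("per_blob_risk must be between 0 and 1")
    # P(at least one bad) = 1 - P(all clean)
    return 1.0 - (1.0 - per_blob_risk) ** blob_count


if __name__ == "__main__":
    # Hypothetical 1% risk per blob; the numbers are made up.
    for count in (0, 1, 5, 20, 100):
        print(count, round(chance_of_any_bad_blob(count, 0.01), 4))
```

With zero blobs the chance is zero, and every added blob pushes it up, which is the tyre analogy in a single formula.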